SQUID Proxy Server Monitoring with MRTG

This short reference guide was made on request by a creature called 'Humans' living on planet earth ;)

☻

This is a short reference guide to monitoring various SQUID counters with MRTG installed on Ubuntu. In this example I have configured SQUID and MRTG on the same Ubuntu box. I have also shown how to install Apache, MRTG, a scheduler job to run it every 5 minutes, the SNMP services and utilities, along with the MIBs.

If you have a freshly installed Ubuntu, you first need to install a web server (apache2)

| apt-get install apache2 |

Then install MRTG

| apt-get install mrtg |

Now we will install the SNMP server and other SNMP utilities, so that we can collect information for localhost and remote PCs via SNMP.

| apt-get install snmp snmpd |

Next, put the following lines in /etc/snmp/snmpd.conf (the file that the snmpd options below point to)

| rocommunity public
syslocation "Karachi NOC, Paksitan"
syscontact aacable@hotmail.com |

Now edit /etc/default/snmpd and change the following

| # snmpd options (use syslog, close stdin/out/err).
SNMPDOPTS='-Lsd -Lf /dev/null -u snmp -I -smux -p /var/run/snmpd.pid' |

To THIS:

| # snmpd options (use syslog, close stdin/out/err).
#SNMPDOPTS='-Lsd -Lf /dev/null -u snmp -I -smux -p /var/run/snmpd.pid '
SNMPDOPTS='-Lsd -Lf /dev/null -u snmp -I -smux -p /var/run/snmpd.pid -c /etc/snmp/snmpd.conf' |

Then restart snmpd so the change takes effect

| /etc/init.d/snmpd restart |

OR

| service snmpd restart |

Install the MIBs downloader

| sudo apt-get install snmp-mibs-downloader |

Create a folder for the MIBs and copy the downloaded MIB files into it

| mkdir /cfg
mkdir /cfg/mibs
cp /var/lib/mibs/ietf/* /cfg/mibs
cd /cfg/mibs |

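Format that will be used in the cfg file, e.g.:

LoadMIBs: /cfg/mibs/squid.mib

This assumes Squid's MIB file is present at /cfg/mibs/squid.mib. Squid ships its MIB as mib.txt (typically under /usr/share/squid/), so copy it to that name if it is not already there.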

Testing SNMP Service for localhost.

Now that the SNMP service has been installed, it is better to do an snmpwalk test from localhost or another remote host to verify that our new configuration is responding correctly. Issue the following command from a localhost terminal.

| snmpwalk -v 1 -c public 127.0.0.1 |

You will see a lot of OIDs and values, which confirms that the SNMP service is installed and responding OK.

As shown in the image below …
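A quick way to turn that visual check into a yes/no answer is to count the rows that snmpwalk returns. The following is only a sketch: `count_snmp_rows` is a hypothetical helper name, and it assumes snmpwalk prints its usual `OID = TYPE: value` lines.

```shell
#!/bin/sh
# Hypothetical helper: read snmpwalk output on stdin and print how many
# "OID = value" rows it contains. The exit status is non-zero when there
# are none (for example after a timeout).
count_snmp_rows() {
    # grep -c prints 0 and exits 1 when nothing matches, hence the "|| true".
    rows=$(grep -c ' = ' || true)
    printf '%s\n' "$rows"
    [ "$rows" -gt 0 ]
}
```

It would be used as `snmpwalk -v 1 -c public 127.0.0.1 | count_snmp_rows`, which prints the row count and fails when the agent did not answer.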

Adding MRTG to crontab to run every 5 minutes

To add the scheduler job, first edit the crontab file

crontab -e

(if it asks for a preferred text editor, go with nano, it's much easier)

Now add the following line

| */5 * * * * env LANG=C mrtg /etc/mrtg.cfg --logging /var/log/mrtg.log |
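If you would rather script this step than use the editor, the sketch below adds the same line without creating duplicates when it is run again. `merge_mrtg_cron` is a hypothetical helper name, not part of MRTG or cron; it only rewrites crontab text passed through it.

```shell
#!/bin/sh
# The cron entry from the guide, kept in one place.
MRTG_CRON_LINE='*/5 * * * * env LANG=C mrtg /etc/mrtg.cfg --logging /var/log/mrtg.log'

# Hypothetical helper: reads an existing crontab on stdin and prints it
# back with exactly one MRTG entry at the end.
merge_mrtg_cron() {
    # Drop any line that already runs mrtg against /etc/mrtg.cfg, keep the rest.
    # grep exits 1 when it prints nothing, hence the "|| true".
    grep -v 'mrtg /etc/mrtg.cfg' || true
    # Append the single entry we want.
    printf '%s\n' "$MRTG_CRON_LINE"
}
```

To apply it: `crontab -l 2>/dev/null | merge_mrtg_cron | crontab -`. Running that twice leaves the crontab unchanged the second time.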

Some tips for INDEX MAKER and running MRTG manually …

Following is the command to create a CFG file for a remote PC.

cfgmaker public@192.168.2.1 > /cfg/proxy.cfg

Following is the command to check a remote PC's SNMP info.

snmpwalk -v 1 -c public 192.168.2.1

Following is the command to create the index page for your cfg file.

indexmaker /etc/mrtg.cfg --output /var/www/mrtg/index.html --columns=1 --compact

Following is the command to start MRTG manually to create your graph files. You have to run it every 5 minutes in order to keep the graphs updated.

env LANG=C mrtg /etc/mrtg.cfg

Now let's start with the SQUID config…

SQUID CONFIGURATION FOR SNMP

Edit your squid.conf and add the following

| acl snmppublic snmp_community public
snmp_port 3401
snmp_access allow snmppublic all |
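After restarting Squid with these lines, it is worth checking that its SNMP agent answers on port 3401 before building any graphs. The sketch below is one way to do that. `squid_snmp_walk` and the `SNMPWALK` override variable are hypothetical names, and the walk uses the numeric root of the Squid MIB subtree (.1.3.6.1.4.1.3495.1) so no MIB file has to be loaded.

```shell
#!/bin/sh
# SNMPWALK can be overridden (e.g. for a dry run); it defaults to
# net-snmp's snmpwalk.
SNMPWALK=${SNMPWALK:-snmpwalk}

# Hypothetical helper: walk Squid's own SNMP agent.
# Arguments (all optional): host, port, community.
squid_snmp_walk() {
    host=${1:-localhost}
    port=${2:-3401}
    community=${3:-public}
    # .1.3.6.1.4.1.3495.1 is the root of the Squid MIB subtree.
    "$SNMPWALK" -v 1 -c "$community" "$host:$port" .1.3.6.1.4.1.3495.1
}
```

Calling `squid_snmp_walk` with no arguments queries localhost:3401 with the public community, matching the squid.conf lines above. A timeout usually means Squid was built without SNMP support or snmp_access is not allowing the request.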

Now use the following proxy.cfg for the Squid graphs

proxy.cfg

| LoadMIBs: /cfg/mibs/squid.mib
Options[_]: growright,nobanner,logscale,pngdate,bits
Options[^]: growright,nobanner,logscale,pngdate,bits
WorkDir: /var/www/mrtg
EnableIPv6: no

### Interface 2 >> Descr: 'eth0' | Name: 'eth0' | Ip: '10.0.0.1' | Eth: '00-0c-29-2b-95-78' ###
Target[localhost_eth0]: #eth0:public@localhost:
SetEnv[localhost_eth0]: MRTG_INT_IP="10.0.0.1" MRTG_INT_DESCR="eth0"
MaxBytes[localhost_eth0]: 1250000
Title[localhost_eth0]: Traffic Analysis for eth0 -- ubuntu
PageTop[localhost_eth0]: <h1>Traffic Analysis for eth0 -- ubuntu</h1>
  <div id="sysdetails">
  <table>
  <tr><td>System:</td><td>ubuntu in "Karachi NOC, Paksitan"</td></tr>
  <tr><td>Maintainer:</td><td>aacable@hotmail.com</td></tr>
  <tr><td>Description:</td><td>eth0 </td></tr>
  <tr><td>ifType:</td><td>ethernetCsmacd (6)</td></tr>
  <tr><td>ifName:</td><td>eth0</td></tr>
  <tr><td>Max Speed:</td><td>1250.0 kBytes/s</td></tr>
  <tr><td>Ip:</td><td>10.0.0.1 (ubuntu.local)</td></tr>
  </table>
  </div>

LoadMIBs: /cfg/mibs/squid.mib
PageFoot[^]: <i>Page managed by <a href="mailto:aacable@hotmail.com">Syed Jahanzaib</a></i>

Target[cacheServerRequests]: cacheServerRequests&cacheServerRequests:public@localhost:3401
MaxBytes[cacheServerRequests]: 10000000
Title[cacheServerRequests]: Server Requests @ zaib_squid_proxy_server
Options[cacheServerRequests]: growright, nopercent
PageTop[cacheServerRequests]: <h1>Server Requests @ zaib_squid_proxy_server</h1>
YLegend[cacheServerRequests]: requests/sec
ShortLegend[cacheServerRequests]: req/s
LegendI[cacheServerRequests]: Requests
LegendO[cacheServerRequests]:
Legend1[cacheServerRequests]: Requests
Legend2[cacheServerRequests]:

Target[cacheServerErrors]: cacheServerErrors&cacheServerErrors:public@localhost:3401
MaxBytes[cacheServerErrors]: 10000000
Title[cacheServerErrors]: Server Errors @ zaib_squid_proxy_server
Options[cacheServerErrors]: growright, nopercent
PageTop[cacheServerErrors]: <h1>Server Errors @ zaib_squid_proxy_server</h1>
YLegend[cacheServerErrors]: errors/sec
ShortLegend[cacheServerErrors]: err/s
LegendI[cacheServerErrors]: Errors
LegendO[cacheServerErrors]:
Legend1[cacheServerErrors]: Errors
Legend2[cacheServerErrors]:

Target[cacheServerInOutKb]: cacheServerInKb&cacheServerOutKb:public@localhost:3401 * 1024
MaxBytes[cacheServerInOutKb]: 1000000000
Title[cacheServerInOutKb]: Server In/Out Traffic @ zaib_squid_proxy_server
Options[cacheServerInOutKb]: growright, nopercent
PageTop[cacheServerInOutKb]: <h1>Server In/Out Traffic @ zaib_squid_proxy_server</h1>
YLegend[cacheServerInOutKb]: Bytes/sec
ShortLegend[cacheServerInOutKb]: Bytes/s
LegendI[cacheServerInOutKb]: Server In
LegendO[cacheServerInOutKb]: Server Out
Legend1[cacheServerInOutKb]: Server In
Legend2[cacheServerInOutKb]: Server Out

Target[cacheHttpHits]: cacheHttpHits&cacheHttpHits:public@localhost:3401
MaxBytes[cacheHttpHits]: 10000000
Title[cacheHttpHits]: HTTP Hits @ zaib_squid_proxy_server
Options[cacheHttpHits]: growright, nopercent
PageTop[cacheHttpHits]: <h1>HTTP Hits @ zaib_squid_proxy_server</h1>
YLegend[cacheHttpHits]: hits/sec
ShortLegend[cacheHttpHits]: hits/s
LegendI[cacheHttpHits]: Hits
LegendO[cacheHttpHits]:
Legend1[cacheHttpHits]: Hits
Legend2[cacheHttpHits]:

Target[cacheHttpErrors]: cacheHttpErrors&cacheHttpErrors:public@localhost:3401
MaxBytes[cacheHttpErrors]: 10000000
Title[cacheHttpErrors]: HTTP Errors @ zaib_squid_proxy_server
Options[cacheHttpErrors]: growright, nopercent
PageTop[cacheHttpErrors]: <h1>HTTP Errors @ zaib_squid_proxy_server</h1>
YLegend[cacheHttpErrors]: errors/sec
ShortLegend[cacheHttpErrors]: err/s
LegendI[cacheHttpErrors]: Errors
LegendO[cacheHttpErrors]:
Legend1[cacheHttpErrors]: Errors
Legend2[cacheHttpErrors]:

Target[cacheIcpPktsSentRecv]: cacheIcpPktsSent&cacheIcpPktsRecv:public@localhost:3401
MaxBytes[cacheIcpPktsSentRecv]: 10000000
Title[cacheIcpPktsSentRecv]: ICP Packets Sent/Received
Options[cacheIcpPktsSentRecv]: growright, nopercent
PageTop[cacheIcpPktsSentRecv]: <h1>ICP Packets Sent/Received @ zaib_squid_proxy_server</h1>
YLegend[cacheIcpPktsSentRecv]: packets/sec
ShortLegend[cacheIcpPktsSentRecv]: pkts/s
LegendI[cacheIcpPktsSentRecv]: Pkts Sent
LegendO[cacheIcpPktsSentRecv]: Pkts Received
Legend1[cacheIcpPktsSentRecv]: Pkts Sent
Legend2[cacheIcpPktsSentRecv]: Pkts Received

Target[cacheIcpKbSentRecv]: cacheIcpKbSent&cacheIcpKbRecv:public@localhost:3401 * 1024
MaxBytes[cacheIcpKbSentRecv]: 1000000000
Title[cacheIcpKbSentRecv]: ICP Bytes Sent/Received
Options[cacheIcpKbSentRecv]: growright, nopercent
PageTop[cacheIcpKbSentRecv]: <h1>ICP Bytes Sent/Received @ zaib_squid_proxy_server</h1>
YLegend[cacheIcpKbSentRecv]: Bytes/sec
ShortLegend[cacheIcpKbSentRecv]: Bytes/s
LegendI[cacheIcpKbSentRecv]: Sent
LegendO[cacheIcpKbSentRecv]: Received
Legend1[cacheIcpKbSentRecv]: Sent
Legend2[cacheIcpKbSentRecv]: Received

Target[cacheHttpInOutKb]: cacheHttpInKb&cacheHttpOutKb:public@localhost:3401 * 1024
MaxBytes[cacheHttpInOutKb]: 1000000000
Title[cacheHttpInOutKb]: HTTP In/Out Traffic @ zaib_squid_proxy_server
Options[cacheHttpInOutKb]: growright, nopercent
PageTop[cacheHttpInOutKb]: <h1>HTTP In/Out Traffic @ zaib_squid_proxy_server</h1>
YLegend[cacheHttpInOutKb]: Bytes/second
ShortLegend[cacheHttpInOutKb]: Bytes/s
LegendI[cacheHttpInOutKb]: HTTP In
LegendO[cacheHttpInOutKb]: HTTP Out
Legend1[cacheHttpInOutKb]: HTTP In
Legend2[cacheHttpInOutKb]: HTTP Out

Target[cacheCurrentSwapSize]: cacheCurrentSwapSize&cacheCurrentSwapSize:public@localhost:3401
MaxBytes[cacheCurrentSwapSize]: 1000000000
Title[cacheCurrentSwapSize]: Current Swap Size @ zaib_squid_proxy_server
Options[cacheCurrentSwapSize]: gauge, growright, nopercent
PageTop[cacheCurrentSwapSize]: <h1>Current Swap Size @ zaib_squid_proxy_server</h1>
YLegend[cacheCurrentSwapSize]: swap size
ShortLegend[cacheCurrentSwapSize]: Bytes
LegendI[cacheCurrentSwapSize]: Swap Size
LegendO[cacheCurrentSwapSize]:
Legend1[cacheCurrentSwapSize]: Swap Size
Legend2[cacheCurrentSwapSize]:

Target[cacheNumObjCount]: cacheNumObjCount&cacheNumObjCount:public@localhost:3401
MaxBytes[cacheNumObjCount]: 10000000
Title[cacheNumObjCount]: Num Object Count @ zaib_squid_proxy_server
Options[cacheNumObjCount]: gauge, growright, nopercent
PageTop[cacheNumObjCount]: <h1>Num Object Count @ zaib_squid_proxy_server</h1>
YLegend[cacheNumObjCount]: # of objects
ShortLegend[cacheNumObjCount]: objects
LegendI[cacheNumObjCount]: Num Objects
LegendO[cacheNumObjCount]:
Legend1[cacheNumObjCount]: Num Objects
Legend2[cacheNumObjCount]:

Target[cacheCpuUsage]: cacheCpuUsage&cacheCpuUsage:public@localhost:3401
MaxBytes[cacheCpuUsage]: 100
AbsMax[cacheCpuUsage]: 100
Title[cacheCpuUsage]: CPU Usage @ zaib_squid_proxy_server
Options[cacheCpuUsage]: absolute, gauge, noinfo, growright, nopercent
Unscaled[cacheCpuUsage]: dwmy
PageTop[cacheCpuUsage]: <h1>CPU Usage @ zaib_squid_proxy_server</h1>
YLegend[cacheCpuUsage]: usage %
ShortLegend[cacheCpuUsage]: %
LegendI[cacheCpuUsage]: CPU Usage
LegendO[cacheCpuUsage]:
Legend1[cacheCpuUsage]: CPU Usage
Legend2[cacheCpuUsage]:

Target[cacheMemUsage]: cacheMemUsage&cacheMemUsage:public@localhost:3401 * 1024
MaxBytes[cacheMemUsage]: 2000000000
Title[cacheMemUsage]: Memory Usage
Options[cacheMemUsage]: gauge, growright, nopercent
PageTop[cacheMemUsage]: <h1>Total memory accounted for @ zaib_squid_proxy_server</h1>
YLegend[cacheMemUsage]: Bytes
ShortLegend[cacheMemUsage]: Bytes
LegendI[cacheMemUsage]: Mem Usage
LegendO[cacheMemUsage]:
Legend1[cacheMemUsage]: Mem Usage
Legend2[cacheMemUsage]:

Target[cacheSysPageFaults]: cacheSysPageFaults&cacheSysPageFaults:public@localhost:3401
MaxBytes[cacheSysPageFaults]: 10000000
Title[cacheSysPageFaults]: Sys Page Faults @ zaib_squid_proxy_server
Options[cacheSysPageFaults]: growright, nopercent
PageTop[cacheSysPageFaults]: <h1>Sys Page Faults @ zaib_squid_proxy_server</h1>
YLegend[cacheSysPageFaults]: page faults/sec
ShortLegend[cacheSysPageFaults]: PF/s
LegendI[cacheSysPageFaults]: Page Faults
LegendO[cacheSysPageFaults]:
Legend1[cacheSysPageFaults]: Page Faults
Legend2[cacheSysPageFaults]:

Target[cacheSysVMsize]: cacheSysVMsize&cacheSysVMsize:public@localhost:3401 * 1024
MaxBytes[cacheSysVMsize]: 1000000000
Title[cacheSysVMsize]: Storage Mem Size @ zaib_squid_proxy_server
Options[cacheSysVMsize]: gauge, growright, nopercent
PageTop[cacheSysVMsize]: <h1>Storage Mem Size @ zaib_squid_proxy_server</h1>
YLegend[cacheSysVMsize]: mem size
ShortLegend[cacheSysVMsize]: Bytes
LegendI[cacheSysVMsize]: Mem Size
LegendO[cacheSysVMsize]:
Legend1[cacheSysVMsize]: Mem Size
Legend2[cacheSysVMsize]:

Target[cacheSysStorage]: cacheSysStorage&cacheSysStorage:public@localhost:3401
MaxBytes[cacheSysStorage]: 1000000000
Title[cacheSysStorage]: Storage Swap Size @ zaib_squid_proxy_server
Options[cacheSysStorage]: gauge, growright, nopercent
PageTop[cacheSysStorage]: <h1>Storage Swap Size @ zaib_squid_proxy_server</h1>
YLegend[cacheSysStorage]: swap size (KB)
ShortLegend[cacheSysStorage]: KBytes
LegendI[cacheSysStorage]: Swap Size
LegendO[cacheSysStorage]:
Legend1[cacheSysStorage]: Swap Size
Legend2[cacheSysStorage]:

Target[cacheSysNumReads]: cacheSysNumReads&cacheSysNumReads:public@localhost:3401
MaxBytes[cacheSysNumReads]: 10000000
Title[cacheSysNumReads]: HTTP I/O number of reads @ zaib_squid_proxy_server
Options[cacheSysNumReads]: growright, nopercent
PageTop[cacheSysNumReads]: <h1>HTTP I/O number of reads @ zaib_squid_proxy_server</h1>
YLegend[cacheSysNumReads]: reads/sec
ShortLegend[cacheSysNumReads]: reads/s
LegendI[cacheSysNumReads]: I/O
LegendO[cacheSysNumReads]:
Legend1[cacheSysNumReads]: I/O
Legend2[cacheSysNumReads]:

Target[cacheCpuTime]: cacheCpuTime&cacheCpuTime:public@localhost:3401
MaxBytes[cacheCpuTime]: 1000000000
Title[cacheCpuTime]: Cpu Time
Options[cacheCpuTime]: gauge, growright, nopercent
PageTop[cacheCpuTime]: <h1>Amount of cpu seconds consumed @ zaib_squid_proxy_server</h1>
YLegend[cacheCpuTime]: cpu seconds
ShortLegend[cacheCpuTime]: cpu seconds
LegendI[cacheCpuTime]: CPU Time
LegendO[cacheCpuTime]:
Legend1[cacheCpuTime]: CPU Time
Legend2[cacheCpuTime]:

Target[cacheMaxResSize]: cacheMaxResSize&cacheMaxResSize:public@localhost:3401 * 1024
MaxBytes[cacheMaxResSize]: 1000000000
Title[cacheMaxResSize]: Max Resident Size
Options[cacheMaxResSize]: gauge, growright, nopercent
PageTop[cacheMaxResSize]: <h1>Maximum Resident Size @ zaib_squid_proxy_server</h1>
YLegend[cacheMaxResSize]: Bytes
ShortLegend[cacheMaxResSize]: Bytes
LegendI[cacheMaxResSize]: Size
LegendO[cacheMaxResSize]:
Legend1[cacheMaxResSize]: Size
Legend2[cacheMaxResSize]:

Target[cacheCurrentUnlinkRequests]: cacheCurrentUnlinkRequests&cacheCurrentUnlinkRequests:public@localhost:3401
MaxBytes[cacheCurrentUnlinkRequests]: 1000000000
Title[cacheCurrentUnlinkRequests]: Unlinkd Requests
Options[cacheCurrentUnlinkRequests]: growright, nopercent
PageTop[cacheCurrentUnlinkRequests]: <h1>Requests given to unlinkd @ zaib_squid_proxy_server</h1>
YLegend[cacheCurrentUnlinkRequests]: requests/sec
ShortLegend[cacheCurrentUnlinkRequests]: reqs/s
LegendI[cacheCurrentUnlinkRequests]: Unlinkd requests
LegendO[cacheCurrentUnlinkRequests]:
Legend1[cacheCurrentUnlinkRequests]: Unlinkd requests
Legend2[cacheCurrentUnlinkRequests]:

Target[cacheCurrentUnusedFileDescrCount]: cacheCurrentUnusedFileDescrCount&cacheCurrentUnusedFileDescrCount:public@localhost:3401
MaxBytes[cacheCurrentUnusedFileDescrCount]: 1000000000
Title[cacheCurrentUnusedFileDescrCount]: Available File Descriptors
Options[cacheCurrentUnusedFileDescrCount]: gauge, growright, nopercent
PageTop[cacheCurrentUnusedFileDescrCount]: <h1>Available number of file descriptors @ zaib_squid_proxy_server</h1>
YLegend[cacheCurrentUnusedFileDescrCount]: # of FDs
ShortLegend[cacheCurrentUnusedFileDescrCount]: FDs
LegendI[cacheCurrentUnusedFileDescrCount]: File Descriptors
LegendO[cacheCurrentUnusedFileDescrCount]:
Legend1[cacheCurrentUnusedFileDescrCount]: File Descriptors
Legend2[cacheCurrentUnusedFileDescrCount]:

Target[cacheCurrentReservedFileDescrCount]: cacheCurrentReservedFileDescrCount&cacheCurrentReservedFileDescrCount:public@localhost:3401
MaxBytes[cacheCurrentReservedFileDescrCount]: 1000000000
Title[cacheCurrentReservedFileDescrCount]: Reserved File Descriptors
Options[cacheCurrentReservedFileDescrCount]: gauge, growright, nopercent
PageTop[cacheCurrentReservedFileDescrCount]: <h1>Reserved number of file descriptors @ zaib_squid_proxy_server</h1>
YLegend[cacheCurrentReservedFileDescrCount]: # of FDs
ShortLegend[cacheCurrentReservedFileDescrCount]: FDs
LegendI[cacheCurrentReservedFileDescrCount]: File Descriptors
LegendO[cacheCurrentReservedFileDescrCount]:
Legend1[cacheCurrentReservedFileDescrCount]: File Descriptors
Legend2[cacheCurrentReservedFileDescrCount]:

Target[cacheClients]: cacheClients&cacheClients:public@localhost:3401
MaxBytes[cacheClients]: 1000000000
Title[cacheClients]: Number of Clients
Options[cacheClients]: gauge, growright, nopercent
PageTop[cacheClients]: <h1>Number of clients accessing cache @ zaib_squid_proxy_server</h1>
YLegend[cacheClients]: clients/sec
ShortLegend[cacheClients]: clients/s
LegendI[cacheClients]: Num Clients
LegendO[cacheClients]:
Legend1[cacheClients]: Num Clients
Legend2[cacheClients]:

Target[cacheHttpAllSvcTime]: cacheHttpAllSvcTime.5&cacheHttpAllSvcTime.60:public@localhost:3401
MaxBytes[cacheHttpAllSvcTime]: 1000000000
Title[cacheHttpAllSvcTime]: HTTP All Service Time
Options[cacheHttpAllSvcTime]: gauge, growright, nopercent
PageTop[cacheHttpAllSvcTime]: <h1>HTTP all service time @ zaib_squid_proxy_server</h1>
YLegend[cacheHttpAllSvcTime]: svc time (ms)
ShortLegend[cacheHttpAllSvcTime]: ms
LegendI[cacheHttpAllSvcTime]: Median Svc Time (5min)
LegendO[cacheHttpAllSvcTime]: Median Svc Time (60min)
Legend1[cacheHttpAllSvcTime]: Median Svc Time
Legend2[cacheHttpAllSvcTime]: Median Svc Time

Target[cacheHttpMissSvcTime]: cacheHttpMissSvcTime.5&cacheHttpMissSvcTime.60:public@localhost:3401
MaxBytes[cacheHttpMissSvcTime]: 1000000000
Title[cacheHttpMissSvcTime]: HTTP Miss Service Time
Options[cacheHttpMissSvcTime]: gauge, growright, nopercent
PageTop[cacheHttpMissSvcTime]: <h1>HTTP miss service time @ zaib_squid_proxy_server</h1>
YLegend[cacheHttpMissSvcTime]: svc time (ms)
ShortLegend[cacheHttpMissSvcTime]: ms
LegendI[cacheHttpMissSvcTime]: Median Svc Time (5min)
LegendO[cacheHttpMissSvcTime]: Median Svc Time (60min)
Legend1[cacheHttpMissSvcTime]: Median Svc Time
Legend2[cacheHttpMissSvcTime]: Median Svc Time

Target[cacheHttpNmSvcTime]: cacheHttpNmSvcTime.5&cacheHttpNmSvcTime.60:public@localhost:3401
MaxBytes[cacheHttpNmSvcTime]: 1000000000
Title[cacheHttpNmSvcTime]: HTTP Near Miss Service Time
Options[cacheHttpNmSvcTime]: gauge, growright, nopercent
PageTop[cacheHttpNmSvcTime]: <h1>HTTP near miss service time @ zaib_squid_proxy_server</h1>
YLegend[cacheHttpNmSvcTime]: svc time (ms)
ShortLegend[cacheHttpNmSvcTime]: ms
LegendI[cacheHttpNmSvcTime]: Median Svc Time (5min)
LegendO[cacheHttpNmSvcTime]: Median Svc Time (60min)
Legend1[cacheHttpNmSvcTime]: Median Svc Time
Legend2[cacheHttpNmSvcTime]: Median Svc Time

Target[cacheHttpHitSvcTime]: cacheHttpHitSvcTime.5&cacheHttpHitSvcTime.60:public@localhost:3401
MaxBytes[cacheHttpHitSvcTime]: 1000000000
Title[cacheHttpHitSvcTime]: HTTP Hit Service Time
Options[cacheHttpHitSvcTime]: gauge, growright, nopercent
PageTop[cacheHttpHitSvcTime]: <h1>HTTP hit service time @ zaib_squid_proxy_server</h1>
YLegend[cacheHttpHitSvcTime]: svc time (ms)
ShortLegend[cacheHttpHitSvcTime]: ms
LegendI[cacheHttpHitSvcTime]: Median Svc Time (5min)
LegendO[cacheHttpHitSvcTime]: Median Svc Time (60min)
Legend1[cacheHttpHitSvcTime]: Median Svc Time
Legend2[cacheHttpHitSvcTime]: Median Svc Time

Target[cacheIcpQuerySvcTime]: cacheIcpQuerySvcTime.5&cacheIcpQuerySvcTime.60:public@localhost:3401
MaxBytes[cacheIcpQuerySvcTime]: 1000000000
Title[cacheIcpQuerySvcTime]: ICP Query Service Time
Options[cacheIcpQuerySvcTime]: gauge, growright, nopercent
PageTop[cacheIcpQuerySvcTime]: <h1>ICP query service time @ zaib_squid_proxy_server</h1>
YLegend[cacheIcpQuerySvcTime]: svc time (ms)
ShortLegend[cacheIcpQuerySvcTime]: ms
LegendI[cacheIcpQuerySvcTime]: Median Svc Time (5min)
LegendO[cacheIcpQuerySvcTime]: Median Svc Time (60min)
Legend1[cacheIcpQuerySvcTime]: Median Svc Time
Legend2[cacheIcpQuerySvcTime]: Median Svc Time

Target[cacheIcpReplySvcTime]: cacheIcpReplySvcTime.5&cacheIcpReplySvcTime.60:public@localhost:3401
MaxBytes[cacheIcpReplySvcTime]: 1000000000
Title[cacheIcpReplySvcTime]: ICP Reply Service Time
Options[cacheIcpReplySvcTime]: gauge, growright, nopercent
PageTop[cacheIcpReplySvcTime]: <h1>ICP reply service time @ zaib_squid_proxy_server</h1>
YLegend[cacheIcpReplySvcTime]: svc time (ms)
ShortLegend[cacheIcpReplySvcTime]: ms
LegendI[cacheIcpReplySvcTime]: Median Svc Time (5min)
LegendO[cacheIcpReplySvcTime]: Median Svc Time (60min)
Legend1[cacheIcpReplySvcTime]: Median Svc Time
Legend2[cacheIcpReplySvcTime]: Median Svc Time

Target[cacheDnsSvcTime]: cacheDnsSvcTime.5&cacheDnsSvcTime.60:public@localhost:3401
MaxBytes[cacheDnsSvcTime]: 1000000000
Title[cacheDnsSvcTime]: DNS Service Time
Options[cacheDnsSvcTime]: gauge, growright, nopercent
PageTop[cacheDnsSvcTime]: <h1>DNS service time @ zaib_squid_proxy_server</h1>
YLegend[cacheDnsSvcTime]: svc time (ms)
ShortLegend[cacheDnsSvcTime]: ms
LegendI[cacheDnsSvcTime]: Median Svc Time (5min)
LegendO[cacheDnsSvcTime]: Median Svc Time (60min)
Legend1[cacheDnsSvcTime]: Median Svc Time
Legend2[cacheDnsSvcTime]: Median Svc Time

Target[cacheRequestHitRatio]: cacheRequestHitRatio.5&cacheRequestHitRatio.60:public@localhost:3401
MaxBytes[cacheRequestHitRatio]: 100
AbsMax[cacheRequestHitRatio]: 100
Title[cacheRequestHitRatio]: Request Hit Ratio @ zaib_squid_proxy_server
Options[cacheRequestHitRatio]: absolute, gauge, noinfo, growright, nopercent
Unscaled[cacheRequestHitRatio]: dwmy
PageTop[cacheRequestHitRatio]: <h1>Request Hit Ratio @ zaib_squid_proxy_server</h1>
YLegend[cacheRequestHitRatio]: %
ShortLegend[cacheRequestHitRatio]: %
LegendI[cacheRequestHitRatio]: Median Hit Ratio (5min)
LegendO[cacheRequestHitRatio]: Median Hit Ratio (60min)
Legend1[cacheRequestHitRatio]: Median Hit Ratio
Legend2[cacheRequestHitRatio]: Median Hit Ratio

Target[cacheRequestByteRatio]: cacheRequestByteRatio.5&cacheRequestByteRatio.60:public@localhost:3401
MaxBytes[cacheRequestByteRatio]: 100
AbsMax[cacheRequestByteRatio]: 100
Title[cacheRequestByteRatio]: Byte Hit Ratio @ zaib_squid_proxy_server
Options[cacheRequestByteRatio]: absolute, gauge, noinfo, growright, nopercent
Unscaled[cacheRequestByteRatio]: dwmy
PageTop[cacheRequestByteRatio]: <h1>Byte Hit Ratio @ zaib_squid_proxy_server</h1>
YLegend[cacheRequestByteRatio]: %
ShortLegend[cacheRequestByteRatio]: %
LegendI[cacheRequestByteRatio]: Median Hit Ratio (5min)
LegendO[cacheRequestByteRatio]: Median Hit Ratio (60min)
Legend1[cacheRequestByteRatio]: Median Hit Ratio
Legend2[cacheRequestByteRatio]: Median Hit Ratio

Target[cacheBlockingGetHostByAddr]: cacheBlockingGetHostByAddr&cacheBlockingGetHostByAddr:public@localhost:3401
MaxBytes[cacheBlockingGetHostByAddr]: 1000000000
Title[cacheBlockingGetHostByAddr]: Blocking gethostbyaddr
Options[cacheBlockingGetHostByAddr]: growright, nopercent
PageTop[cacheBlockingGetHostByAddr]: <h1>Blocking gethostbyaddr count @ zaib_squid_proxy_server</h1>
YLegend[cacheBlockingGetHostByAddr]: blocks/sec
ShortLegend[cacheBlockingGetHostByAddr]: blocks/s
LegendI[cacheBlockingGetHostByAddr]: Blocking
LegendO[cacheBlockingGetHostByAddr]:
Legend1[cacheBlockingGetHostByAddr]: Blocking
Legend2[cacheBlockingGetHostByAddr]: |
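Most of the Squid entries in proxy.cfg follow one repeating shape: a Target line naming the OID twice, then MaxBytes, Title, Options, PageTop and the legends. As an illustration only, the sketch below prints the core of one such stanza for a plain counter. `squid_counter_stanza` is a hypothetical helper, and the community, host, port and server label are simply the values used in this guide.

```shell
#!/bin/sh
# Hypothetical helper: print the core lines of one MRTG stanza for a
# Squid counter. $1 = OID name from the Squid MIB, $2 = graph title,
# $3 = unit used in the legends.
squid_counter_stanza() {
    oid=$1 title=$2 unit=$3
    cat <<EOF
Target[$oid]: $oid&$oid:public@localhost:3401
MaxBytes[$oid]: 10000000
Title[$oid]: $title @ zaib_squid_proxy_server
Options[$oid]: growright, nopercent
PageTop[$oid]: <h1>$title @ zaib_squid_proxy_server</h1>
YLegend[$oid]: $unit/sec
ShortLegend[$oid]: $unit/s
EOF
}

# Example: reproduce the start of the cacheHttpHits stanza.
squid_counter_stanza cacheHttpHits "HTTP Hits" hits
```

Gauge-style values (sizes, percentages, service times) need the extra `gauge` option and different legends, so they are better copied from the config above than generated this way.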

Then issue the following command. To get graphs from a new file, you have to run the command 3 times.

env LANG=C mrtg /etc/mrtg.cfg

Then create its index file, so all graphs can be accessed via a single index file

indexmaker /etc/mrtg.cfg --output /var/www/mrtg/index.html --columns=1 --compact

Now browse to your mrtg folder via browser

http://yourboxip/mrtg

and you will see your graphs in action. However, it will take some time to get the data, as MRTG updates its counters every 5 minutes.
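The three initial runs can be wrapped in a small loop. This is a sketch under assumptions: `prime_mrtg` and the `MRTG_BIN` override are hypothetical names, and the reason for repeating is that on a fresh config MRTG typically warns about missing log files on its first runs before settling down.

```shell
#!/bin/sh
# MRTG_BIN can be overridden for a dry run; it defaults to the mrtg binary.
MRTG_BIN=${MRTG_BIN:-mrtg}

# Hypothetical helper: run MRTG three times against a config file.
# $1 = config file (defaults to /etc/mrtg.cfg).
# The return status is that of the last run.
prime_mrtg() {
    cfg=${1:-/etc/mrtg.cfg}
    for pass in 1 2 3; do
        echo "MRTG pass $pass of 3"
        env LANG=C "$MRTG_BIN" "$cfg"
    done
}
```

Run `prime_mrtg /etc/mrtg.cfg` once after changing the config, then run the indexmaker command above and let cron take over.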

You can see more samples here…

http://chrismiles.info/unix/mrtg/squidsample/

http://chrismiles.info/unix/mrtg/mrtg-squid.cfg

Thank you

Syed Jahanzaib

Howto connect Squid Proxy with Mikrotik with Single Interface

Filed under: Linux Related — Tags: Mikrotik with Squid, squid client original ip, squid with single interface — Syed Jahanzaib / Pinochio~:) @ 12:20 PM

This short reference guide was made on request by a creature called 'Humans' living on planet earth ;)

☻

Scenario:

We want to connect a Squid proxy server with Mikrotik, and the Squid server has only one interface. Mikrotik is running a PPPoE server and has 3 interfaces, as follows.

MIKROTIK INTERFACE EXAMPLE:

MIKROTIK has 3 interfaces as follows…

LAN = 192.168.0.1/24

WAN = 1.1.1.1/24 (gw+dns pointing to wan link)

proxy-interface = 192.168.2.1/24

PPPoE Users IP Pool = 172.16.0.1-172.16.0.255

SQUID INTERFACE EXAMPLE:

SQUID proxy has only one interface as follows…

LAN (eth0) = 192.168.2.2/24

Gateway = 192.168.2.1

DNS = 192.168.2.2


As shown in the image below …

To redirect traffic from the Mikrotik to the Squid proxy server, we have to create a redirect rule.

As shown in the example below …


Mikrotik Configuration:

CLI Version:

| /ip firewall nat
add action=dst-nat chain=dstnat comment="Redirect only PPPoE Users to Proxy Server 192.168.2.2" disabled=no dst-port=80 protocol=tcp src-address=172.16.0.1-172.16.0.255 to-addresses=192.168.2.2 to-ports=8080
add action=masquerade chain=srcnat comment="Default NAT rule for Internet Access" disabled=no |

No IPTABLES configuration is required at the squid end :D

Now try to browse from your client end, and you will see the requests in the squid access.log

As shown in the image below …

DONE :)


TIPs and Tricks !

Just for info purposes …

How to view client original ip in squid logs instead of creepy mikrotik ip

As you have noticed, the above redirect method successfully routes (actually NATs) client traffic to the Squid proxy server. But the Squid logs show only the Mikrotik IP, so we have no idea which client is using the proxy. To see the client's original IP address instead of Mikrotik's, you have to explicitly define the WAN interface in the default NAT rule, so that traffic sent to the proxy interface is not NATted :)

Mikrotik Default NAT rule configuration

As shown in the image below …

Now you can see its effect in the squid logs

As shown in the image below …
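To confirm the effect without reading the log line by line, you can count requests per client address. The sketch below assumes Squid's native access.log format, where the third whitespace-separated field is the client IP. `requests_per_client` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: count requests per client address.
# Reads Squid's native access.log format on stdin, where the third
# whitespace-separated field is the client IP address.
requests_per_client() {
    awk '{ count[$3]++ } END { for (ip in count) print count[ip], ip }' | sort -rn
}
```

Run it as `requests_per_client < /var/log/squid/access.log`. If every request is still attributed to the Mikrotik address (192.168.2.1 in this scenario), the traffic to the proxy is still being NATted. Addresses from the PPPoE pool (172.16.0.x) mean the change worked.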

Regards

☺☻♥

SYED JAHANZAIB

SKYPE – aacable79

April 21, 2014

Howto Cache Youtube with SQUID / LUSCA and bypass Cached Videos from Mikrotik Queue [April, 2014 , zaib]

Filed under: Linux Related, Mikrotik Related — Tags: aacable howto cache youtube, cache youtube with squid, cache youtube with squid lusca, howto cache youtube, Youtube, youtube pakistan — Syed Jahanzaib / Pinochio~:) @ 4:34 PM

LAST UPDATED: 22nd April, 2014 / 0800 hours pkst

Youtube caching is working as of 22nd april, 2014, tested and confirmed

[1st version > 11th January, 2011]

What is LUSCA / SQUID ?

LUSCA is an advanced version, or fork, of SQUID 2. The Lusca project aims to fix the shortcomings in Squid-2. It also supports a variety of clustering protocols. By using it, you can cache some dynamic content that you previously couldn't with Squid.

For example [jz]

# Video caching, i.e. Youtube / tube etc . . .

# Windows / Linux Updates / Anti-virus, Anti-Malware, i.e. Avira / Avast / MBAM etc . . .

# Well known sites, i.e. facebook / google / yahoo etc.

# Download caching, mp3's / mpeg / avi etc . . .

Advantages of Youtube Caching !!!

In most parts of the world, bandwidth is very expensive, so it is (in some scenarios) very useful to cache Youtube videos or any other flash videos. If one user downloads a video / flash file, why can't the same user or another user get the same file from the CACHE? Why should they suck the internet pipe for the same content again and again?

People on the same LAN sometimes watch similar videos. If I put some youtube video link on FACEBOOK, TWITTER or the like, all my friends will watch that video, and that particular video gets viewed many times in a few hours. Videos are usually shared over facebook or other social networking sites, so the chances are high of multiple hits per popular video for my lan users / friends / zaib.

This is the reason why I wrote this article. I have implemented Ubuntu with LUSCA / Squid on it and it's working great, but to achieve some results you need to have some TB of storage drives in your proxy machine.

Disadvantages of Youtube Caching !!!

The chance that another user will watch the same video is really slim. If I search for something specific on youtube, I get more than hundreds of search results for the same video. What is the chance that another user will search for the same thing, and will click on the same link / result? Youtube hosts more than 10 million videos, which is too much to cache anyway. You need a lot of space to cache videos. Accordingly, you will also need ultra modern fast hardware with tons of RAM to handle such a cache giant. Anyhow, try it.

We will divide this article in the following sections

1# Installing SQUID / LUSCA in UBUNTU

2# Setting up SQUID / LUSCA Configuration files

3# Performing some Tests, testing your Cache HIT

4# Using ZPH TOS to deliver cached contents to clients via mikrotik at full LAN speed, bypassing the User Queue for cached contents.


1# Installing SQUID / LUSCA in UBUNTU

I assume your ubuntu box has 2 interfaces configured, one for LAN and the second for WAN, and that you have internet sharing already configured. Now moving on to the LUSCA / SQUID installation.

Here we go ….

Issue the following command. Remember that it's a one-go command (with multiple commands inside it), so it may take a while to update, install and compile all the required items. As written, it expects the LUSCA_HEAD-r14942.tar.gz source tarball to already be in /tmp; no download step appears in it.

| apt-get update &&
apt-get install gcc -y &&
apt-get install build-essential -y &&
apt-get install libstdc++6 -y &&
apt-get install unzip -y &&
apt-get install bzip2 -y &&
apt-get install sharutils -y &&
apt-get install ccze -y &&
apt-get install libzip-dev -y &&
apt-get install automake1.9 -y &&
apt-get install acpid -y &&
apt-get install libfile-readbackwards-perl -y &&
apt-get install dnsmasq -y &&
cd /tmp &&
tar -xvzf LUSCA_HEAD-r14942.tar.gz &&
cd /tmp/LUSCA_HEAD-r14942 &&
./configure \
--prefix=/usr \
--exec_prefix=/usr \
--bindir=/usr/sbin \
--sbindir=/usr/sbin \
--libexecdir=/usr/lib/squid \
--sysconfdir=/etc/squid \
--localstatedir=/var/spool/squid \
--datadir=/usr/share/squid \
--enable-async-io=24 \
--with-aufs-threads=24 \
--with-pthreads \
--enable-storeio=aufs \
--enable-linux-netfilter \
--enable-arp-acl \
--enable-epoll \
--enable-removal-policies=heap \
--with-aio \
--with-dl \
--enable-snmp \
--enable-delay-pools \
--enable-htcp \
--enable-cache-digests \
--disable-unlinkd \
--enable-large-cache-files \
--with-large-files \
--enable-err-languages=English \
--enable-default-err-language=English \
--enable-referer-log \
--with-maxfd=65536 &&
make &&
make install |

EDIT SQUID.CONF FILE

Now edit the squid.conf file with the following command:

| nano /etc/squid/squid.conf |

Delete all existing lines and paste in the lines given below.

Remember that the following squid.conf is not very neat and clean. You will find many unnecessary junk entries in it; I did not have time to clean them all up, so you may clean them as per your own targets and goals.

Now Paste the following data … (in squid.conf)

| #######################################################
## Squid_LUSCA configuration Starts from Here ... #
## Thanks to Mr. Safatah [INDO] for sharing Configs #
## Syed.Jahanzaib / 22nd April, 2014 #
## http://aacable.wordpress.com / aacable@hotmail.com #
#######################################################

# HTTP Port for SQUID Service
http_port 8080 transparent
server_http11 on

# Cache Peer, for parent proxy if you have any, or ignore it.
#cache_peer x.x.x.x parent 8080 0

# Various Logs/files location
pid_filename /var/run/squid.pid
coredump_dir /var/spool/squid/
error_directory /usr/share/squid/errors/English
icon_directory /usr/share/squid/icons
mime_table /etc/squid/mime.conf
access_log daemon:/var/log/squid/access.log squid
cache_log none
#debug_options ALL,1 22,3 11,2 #84,9
referer_log /var/log/squid/referer.log
cache_store_log none
store_dir_select_algorithm round-robin
logfile_daemon /usr/lib/squid/logfile-daemon
logfile_rotate 1

# Cache Policy
cache_mem 6 MB
maximum_object_size_in_memory 0 KB
memory_replacement_policy heap GDSF
cache_replacement_policy heap LFUDA
minimum_object_size 0 KB
maximum_object_size 10 GB
cache_swap_low 98
cache_swap_high 99

# Cache Folder Path, using 5GB for test
cache_dir aufs /cache-1 5000 16 256

# ACL Section
acl all src all
acl manager proto cache_object
acl localhost src 127.0.0.1/32
acl to_localhost dst 127.0.0.0/8
acl localnet src 10.0.0.0/8 # RFC1918 possible internal network
acl localnet src 172.16.0.0/12 # RFC1918 possible internal network
acl localnet src 192.168.0.0/16 # RFC1918 possible internal network
acl localnet src 125.165.92.1 # RFC1918 possible internal network
acl SSL_ports port 443
acl Safe_ports port 80 # http
acl Safe_ports port 21 # ftp
acl Safe_ports port 443 # https
acl Safe_ports port 70 # gopher
acl Safe_ports port 210 # wais
acl Safe_ports port 1025-65535 # unregistered ports
acl Safe_ports port 280 # http-mgmt
acl Safe_ports port 488 # gss-http
acl Safe_ports port 591 # filemaker
acl Safe_ports port 777 # multiling http
acl CONNECT method CONNECT
acl purge method PURGE
acl snmppublic snmp_community public
acl range dstdomain .windowsupdate.com
range_offset_limit -1 KB range

#===========================================================================
# Loading Patch
acl DENYCACHE urlpath_regex \.(ini|ui|lst|inf|pak|ver|patch|md5|cfg|lst|list|rsc|log|conf|dbd|db)$
acl DENYCACHE urlpath_regex (notice.html|afs.dat|dat.asp|patchinfo.xml|version.list|iepngfix.htc|updates.txt|patchlist.txt)
acl DENYCACHE urlpath_regex (pointblank.css|login_form.css|form.css|noupdate.ui|ahn.ui|3n.mh)$
acl DENYCACHE urlpath_regex (Loader|gamenotice|sources|captcha|notice|reset)
no_cache deny DENYCACHE
range_offset_limit 1 MB !DENYCACHE
uri_whitespace strip

#===========================================================================
# Rules to block few Advertising sites
acl ads url_regex -i .youtube\.com\/ad_frame?
acl ads url_regex -i .(s|s[0-90-9])\.youtube\.com
acl ads url_regex -i .googlesyndication\.com
acl ads url_regex -i .doubleclick\.net
acl ads url_regex -i ^http:\/\/googleads\.*
acl ads url_regex -i ^http:\/\/(ad|ads|ads[0-90-9]|ads\d|kad|a[b|d]|ad\d|adserver|adsbox)\.[a-z0-9]*\.[a-z][a-z]*
acl ads url_regex -i ^http:\/\/openx\.[a-z0-9]*\.[a-z][a-z]*
acl ads url_regex -i ^http:\/\/[a-z0-9]*\.openx\.net\/
acl ads url_regex -i ^http:\/\/[a-z0-9]*\.u-ad\.info\/
acl ads url_regex -i ^http:\/\/adserver\.bs\/
acl ads url_regex -i !^http:\/\/adf\.ly
http_access deny ads
http_reply_access deny ads
#deny_info http://yoursite/yourad,htm ads
#==== End Rules: Advertising ====

strip_query_terms off
acl yutub url_regex -i .*youtube\.com\/.*$
acl yutub url_regex -i .*youtu\.be\/.*$
logformat squid1 %{Referer}>h %ru
access_log /var/log/squid/yt.log squid1 yutub

# ==== Custom Option REWRITE ====
acl store_rewrite_list urlpath_regex \/(get_video\?|videodownload\?|videoplayback.*id)
acl store_rewrite_list urlpath_regex \.(mp2|mp3|mid|midi|mp[234]|wav|ram|ra|rm|au|3gp|m4r|m4a)\?
acl store_rewrite_list urlpath_regex \.(mpg|mpeg|mp4|m4v|mov|avi|asf|wmv|wma|dat|flv|swf)\?
acl store_rewrite_list urlpath_regex \.(jpeg|jpg|jpe|jp2|gif|tiff?|pcx|png|bmp|pic|ico)\?
acl store_rewrite_list urlpath_regex \.(chm|dll|doc|docx|xls|xlsx|ppt|pptx|pps|ppsx|mdb|mdbx)\?
acl store_rewrite_list urlpath_regex \.(txt|conf|cfm|psd|wmf|emf|vsd|pdf|rtf|odt)\?
acl store_rewrite_list urlpath_regex \.(class|jar|exe|gz|bz|bz2|tar|tgz|zip|gzip|arj|ace|bin|cab|msi|rar)\?
acl store_rewrite_list urlpath_regex \.(htm|html|mhtml|css|js)\?
acl store_rewrite_list_web url_regex ^http:\/\/([A-Za-z-]+[0-9]+)*\.[A-Za-z]*\.[A-Za-z]*
acl store_rewrite_list_web_CDN url_regex ^http:\/\/[a-z]+[0-9]\.google\.com doubleclick\.net
acl store_rewrite_list_path urlpath_regex \.(mp2|mp3|mid|midi|mp[234]|wav|ram|ra|rm|au|3gp|m4r|m4a)$
acl store_rewrite_list_path urlpath_regex \.(mpg|mpeg|mp4|m4v|mov|avi|asf|wmv|wma|dat|flv|swf)$
acl store_rewrite_list_path urlpath_regex \.(jpeg|jpg|jpe|jp2|gif|tiff?|pcx|png|bmp|pic|ico)$
acl store_rewrite_list_path urlpath_regex \.(chm|dll|doc|docx|xls|xlsx|ppt|pptx|pps|ppsx|mdb|mdbx)$
acl store_rewrite_list_path urlpath_regex \.(txt|conf|cfm|psd|wmf|emf|vsd|pdf|rtf|odt)$
acl store_rewrite_list_path urlpath_regex \.(class|jar|exe|gz|bz|bz2|tar|tgz|zip|gzip|arj|ace|bin|cab|msi|rar)$
acl store_rewrite_list_path urlpath_regex \.(htm|html|mhtml|css|js)$
acl getmethod method GET
storeurl_access deny !getmethod
#this is not related to youtube video its only for CDN pictures
storeurl_access allow store_rewrite_list_web_CDN
storeurl_access allow store_rewrite_list_web store_rewrite_list_path
storeurl_access allow store_rewrite_list
storeurl_access deny all
storeurl_rewrite_program /etc/squid/storeurl.pl
storeurl_rewrite_children 10
storeurl_rewrite_concurrency 40
# ==== End Custom Option REWRITE ====

#===========================================================================
# Custom Option REFRESH PATTERN
#===========================================================================
refresh_pattern (get_video\?|videoplayback\?|videodownload\?|\.flv\?|\.fid\?) 43200 99% 43200 override-expire ignore-reload ignore-must-revalidate ignore-private
refresh_pattern -i (get_video\?|videoplayback\?|videodownload\?) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private

# -- refresh pattern for specific sites -- #
refresh_pattern ^http://*.jobstreet.com.*/.* 720 100% 10080 override-expire override-lastmod ignore-no-cache
refresh_pattern ^http://*.indowebster.com.*/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-reload ignore-no-cache ignore-auth
refresh_pattern ^http://*.21cineplex.*/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-reload ignore-no-cache ignore-auth
refresh_pattern ^http://*.atmajaya.*/.* 720 100% 10080 override-expire ignore-no-cache ignore-auth
refresh_pattern ^http://*.kompas.*/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.theinquirer.*/.* 720 100% 10080 override-expire ignore-no-cache ignore-auth
refresh_pattern ^http://*.blogspot.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.wordpress.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache
refresh_pattern ^http://*.photobucket.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.tinypic.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.imageshack.us/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.kaskus.*/.* 720 100% 28800 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://www.kaskus.com/.* 720 100% 28800 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.detik.*/.* 720 50% 2880 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.detiknews.*/*.* 720 50% 2880 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://video.liputan6.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://static.liputan6.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.friendster.com/.* 720 100% 10080 override-expire override-lastmod ignore-no-cache ignore-auth
refresh_pattern ^http://*.facebook.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://apps.facebook.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.fbcdn.net/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://profile.ak.fbcdn.net/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://static.playspoon.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://cooking.game.playspoon.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern -i http://[^a-z\.]*onemanga\.com/? 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://media?.onemanga.com/.* 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.yahoo.com/.* 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.google.com/.* 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.forummikrotik.com/.* 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth
refresh_pattern ^http://*.linux.or.id/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

# -- refresh pattern for extension -- #
refresh_pattern -i \.(mp2|mp3|mid|midi|mp[234]|wav|ram|ra|rm|au|3gp|m4r|m4a)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private
refresh_pattern -i \.(mpg|mpeg|mp4|m4v|mov|avi|asf|wmv|wma|dat|flv|swf)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private
refresh_pattern -i \.(jpeg|jpg|jpe|jp2|gif|tiff?|pcx|png|bmp|pic|ico)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private
refresh_pattern -i \.(chm|dll|doc|docx|xls|xlsx|ppt|pptx|pps|ppsx|mdb|mdbx)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private
refresh_pattern -i \.(txt|conf|cfm|psd|wmf|emf|vsd|pdf|rtf|odt)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private
refresh_pattern -i \.(class|jar|exe|gz|bz|bz2|tar|tgz|zip|gzip|arj|ace|bin|cab|msi|rar)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private
refresh_pattern -i \.(htm|html|mhtml|css|js)(\?.*|$) 1440 90% 86400 override-expire ignore-reload reload-into-ims
#===========================================================================
refresh_pattern -i (/cgi-bin/|\?) 0 0% 0
refresh_pattern ^gopher: 1440 0% 1440
refresh_pattern ^ftp: 10080 95% 10080 override-lastmod reload-into-ims
refresh_pattern . 0 20% 10080 override-lastmod reload-into-ims

http_access allow manager localhost
http_access deny manager
http_access allow purge localhost
http_access deny !Safe_ports
http_access deny CONNECT !SSL_ports
http_access allow localnet
http_access allow all
http_access deny all
icp_access allow localnet
icp_access deny all
icp_port 0
buffered_logs on
acl shoutcast rep_header X-HTTP09-First-Line ^ICY.[0-9]
upgrade_http0.9 deny shoutcast
acl apache rep_header Server ^Apache
broken_vary_encoding allow apache
forwarded_for off
header_access From deny all
header_access Server deny all
header_access Link deny all
header_access Via deny all
header_access X-Forwarded-For deny all
httpd_suppress_version_string on
shutdown_lifetime 10 seconds
snmp_port 3401
snmp_access allow snmppublic all
dns_timeout 1 minutes
dns_nameservers 8.8.8.8 8.8.4.4
fqdncache_size 5000 #16384
ipcache_size 5000 #16384
ipcache_low 98
ipcache_high 99
log_fqdn off
log_icp_queries off
memory_pools off
maximum_single_addr_tries 2
retry_on_error on
icp_hit_stale on
strip_query_terms off
query_icmp on
reload_into_ims on
emulate_httpd_log off
negative_ttl 0 seconds
pipeline_prefetch on
vary_ignore_expire on
half_closed_clients off
high_page_fault_warning 2
nonhierarchical_direct on
prefer_direct off
cache_mgr aacable@hotmail.com
cache_effective_user proxy
cache_effective_group proxy
visible_hostname proxy.zaib
unique_hostname syed_jahanzaib
cachemgr_passwd none all
client_db on
max_filedescriptors 8192

# ZPH config Marking Cache Hit, so cached contents can be delivered at full lan speed via MT
zph_mode tos
zph_local 0x30
zph_parent 0
zph_option 136 |
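The refresh_pattern rules in this configuration give their minimum and maximum ages in minutes, which makes values such as 5259487 hard to read at a glance. As a quick worked conversion (the helper name `minutes_to_days` is only illustrative):

```shell
#!/bin/sh
# Illustrative helper: convert a refresh_pattern age from minutes to whole days.
minutes_to_days() {
    # Integer division; there are 1440 minutes in a day.
    echo $(( $1 / 1440 ))
}

minutes_to_days 10080     # prints 7
minutes_to_days 43200     # prints 30
minutes_to_days 5259487   # prints 3652, roughly ten years
```

So the media and archive rules keep objects for about ten years, while the site-specific rules mostly cap at one week.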

.

STOREURL.PL

Now we have to create an important file named storeurl.pl. It does the main job of redirecting requests so that video is pulled from the cache.

| touch /etc/squid/storeurl.pl
chmod +x /etc/squid/storeurl.pl
nano /etc/squid/storeurl.pl |

| #!/usr/bin/perl
#######################################################
## Squid_LUSCA storeurl.pl starts from Here ... #
## Thanks to Mr. Safatah [INDO] for sharing Configs #
## Syed.Jahanzaib / 22nd April, 2014 #
## http://aacable.wordpress.com / aacable@hotmail.com #
#######################################################
$|=1;
while (<>) {
    @X = split;
    $x = $X[0] . " ";
    ##=================
    ## Encoding YOUTUBE
    ##=================
    if ($X[1] =~ m/^http\:\/\/.*(youtube|google).*videoplayback.*/){
        @itag = m/[&?](itag=[0-9]*)/;
        @CPN = m/[&?]cpn\=([a-zA-Z0-9\-\_]*)/;
        @IDS = m/[&?]id\=([a-zA-Z0-9\-\_]*)/;
        $id = &GetID($CPN[0], $IDS[0]);
        @range = m/[&?](range=[^\&\s]*)/;
        print $x . "http://fathayu/" . $id . "&@itag@range\n";
    } elsif ($X[1] =~ m/(youtube|google).*videoplayback\?/ ){
        @itag = m/[&?](itag=[0-9]*)/;
        @id = m/[&?](id=[^\&]*)/;
        @redirect = m/[&?](redirect_counter=[^\&]*)/;
        print $x . "http://fathayu/";
    # ==========================================================================
    # VIMEO
    # ==========================================================================
    } elsif ($X[1] =~ m/^http:\/\/av\.vimeo\.com\/\d+\/\d+\/(.*)\?/) {
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/pdl\.vimeocdn\.com\/\d+\/\d+\/(.*)\?/) {
        print $x . "http://fathayu/" . $1 . "\n";
    # ==========================================================================
    # DAILYMOTION
    # ==========================================================================
    } elsif ($X[1] =~ m/^http:\/\/proxy-[0-9]{1}\.dailymotion\.com\/(.*)\/(.*)\/video\/\d{3}\/\d{3}\/(.*.flv)/) {
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/vid[0-9]\.ak\.dmcdn\.net\/(.*)\/(.*)\/video\/\d{3}\/\d{3}\/(.*.flv)/) {
        print $x . "http://fathayu/" . $1 . "\n";
    # ==========================================================================
    # YIMG
    # ==========================================================================
    } elsif ($X[1] =~ m/^http:\/\/(.*yimg.com)\/\/(.*)\/([^\/\?\&]*\/[^\/\?\&]*\.[^\/\?\&]{3,4})(\?.*)?$/) {
        print $x . "http://fathayu/" . $3 . "\n";
    # ==========================================================================
    # YIMG DOUBLE
    # ==========================================================================
    } elsif ($X[1] =~ m/^http:\/\/(.*?)\.yimg\.com\/(.*?)\.yimg\.com\/(.*?)\?(.*)/) {
        print $x . "http://fathayu/" . $3 . "\n";
    # ==========================================================================
    # YIMG WITH &sig=
    # ==========================================================================
    } elsif ($X[1] =~ m/^http:\/\/(.*?)\.yimg\.com\/(.*)/) {
        @y = ($1,$2);
        $y[0] =~ s/[a-z]+[0-9]+/cdn/;
        $y[1] =~ s/&sig=.*//;
        print $x . "http://fathayu/" . $y[0] . ".yimg.com/" . $y[1] . "\n";
    # ==========================================================================
    # YTIMG
    # ==========================================================================
    } elsif ($X[1] =~ m/^http:\/\/i[1-4]\.ytimg\.com(.*)/) {
        print $x . "http://fathayu/" . $1 . "\n";
    # ==========================================================================
    # PORN Movies
    # ==========================================================================
    } elsif (($X[1] =~ /maxporn/) && (m/^http:\/\/([^\/]*?)\/(.*?)\/([^\/]*?)(\?.*)?$/)) {
        print $x . "http://" . $1 . "/SQUIDINTERNAL/" . $3 . "\n";
    # Domain/path/.*/path/filename
    } elsif (($X[1] =~ /fucktube/) && (m/^http:\/\/(.*?)(\.[^\.\-]*?[^\/]*\/[^\/]*)\/(.*)\/([^\/]*)\/([^\/\?\&]*)\.([^\/\?\&]{3,4})(\?.*?)$/)) {
        @y = ($1,$2,$4,$5,$6);
        $y[0] =~ s/(([a-zA-Z]+[0-9]+(-[a-zA-Z])?$)|([^\.]*cdn[^\.]*)|([^\.]*cache[^\.]*))/cdn/;
        print $x . "http://" . $y[0] . $y[1] . "/" . $y[2] . "/" . $y[3] . "." . $y[4] . "\n";
    # Like porn hub variables url and center part of the path, filename extension 3 or 4 with or without ? at the end
    } elsif (($X[1] =~ /tube8|pornhub|xvideos/) && (m/^http:\/\/(([A-Za-z]+[0-9-.]+)*?(\.[a-z]*)?)\.([a-z]*[0-9]?\.[^\/]{3}\/[a-z]*)(.*?)((\/[a-z]*)?(\/[^\/]*){4}\.[^\/\?]{3,4})(\?.*)?$/)) {
        print $x . "http://cdn." . $4 . $6 . "\n";
    } elsif (($u =~ /tube8|redtube|hardcore-teen|pornhub|tubegalore|xvideos|hostedtube|pornotube|redtubefiles/) && (m/^http:\/\/(([A-Za-z]+[0-9-.]+)*?(\.[a-z]*)?)\.([a-z]*[0-9]?\.[^\/]{3}\/[a-z]*)(.*?)((\/[a-z]*)?(\/[^\/]*){4}\.[^\/\?]{3,4})(\?.*)?$/)) {
        print $x . "http://cdn." . $4 . $6 . "\n";
    # acl store_rewrite_list url_regex -i \.xvideos\.com\/.*(3gp|mpg|flv|mp4)
    # refresh_pattern -i \.xvideos\.com\/.*(3gp|mpg|flv|mp4) 1440 99% 14400 override-expire override-lastmod ignore-no-cache ignore-private reload-into-ims ignore-must-revalidate ignore-reload store-stale
    # ==========================================================================
    } elsif ($X[1] =~ m/^http:\/\/.*\.xvideos\.com\/.*\/([\w\d\-\.\%]*\.(3gp|mpg|flv|mp4))\?.*/){
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/[\d]+\.[\d]+\.[\d]+\.[\d]+\/.*\/xh.*\/([\w\d\-\.\%]*\.flv)/){
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/[\d]+\.[\d]+\.[\d]+\.[\d]+.*\/([\w\d\-\.\%]*\.flv)\?start=0/){
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/.*\.youjizz\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp))\?.*/){
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.keezmovies[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/){
        print $x . "http://fathayu/" . $1 . $2 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.tube8[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/) {
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.youporn[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/){
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.spankwire[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/) {
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.pornhub[\w\d\-\.\%]*\.com.*\/([[\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/){
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif ($X[1] =~ m/^http:\/\/[\w\d\-\_\.\%\/]*.*\/([\w\d\-\_\.]+\.(flv|mp3|mp4|3gp|wmv))\?.*cdn\_hash.*/){
        print $x . "http://fathayu/" . $1 . "\n";
    } elsif (($X[1] =~ /maxporn/) && (m/^http:\/\/([^\/]*?)\/(.*?)\/([^\/]*?)(\?.*)?$/)) {
        print $x . "http://fathayu/" . $1 . "/SQUIDINTERNAL/" . $3 . "\n";
    } elsif (($X[1] =~ /fucktube/) && (m/^http:\/\/(.*?)(\.[^\.\-]*?[^\/]*\/[^\/]*)\/(.*)\/([^\/]*)\/([^\/\?\&]*)\.([^\/\?\&]{3,4})(\?.*?)$/)) {
        @y = ($1,$2,$4,$5,$6);
        $y[0] =~ s/(([a-zA-Z]+[0-9]+(-[a-zA-Z])?$)|([^\.]*cdn[^\.]*)|([^\.]*cache[^\.]*))/cdn/;
        print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "/" . $y[3] . "." . $y[4] . "\n";
    } elsif (($X[1] =~ /media[0-9]{1,5}\.youjizz/) && (m/^http:\/\/(.*?)(\.[^\.\-]*?\.[^\/]*)\/(.*)\/([^\/\?\&]*)\.([^\/\?\&]{3,4})(\?.*?)$/)) {
        @y = ($1,$2,$4,$5);
        $y[0] =~ s/(([a-zA-Z]+[0-9]+(-[a-zA-Z])?$)|([^\.]*cdn[^\.]*)|([^\.]*cache[^\.]*))/cdn/;
        print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "." . $y[3] . "\n";
    # ==========================================================================
    # Filehippo
    # ==========================================================================
    } elsif (($X[1] =~ /filehippo/) && (m/^http:\/\/(.*?)\.(.*?)\/(.*?)\/(.*)\.([a-z0-9]{3,4})(\?.*)?/)) {
        @y = ($1,$2,$4,$5);
        $y[0] =~ s/[a-z0-9]{2,5}/cdn./;
        print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "." . $y[3] . "\n";
    } elsif (($X[1] =~ /filehippo/) && (m/^http:\/\/(.*?)(\.[^\/]*?)\/(.*?)\/([^\?\&\=]*)\.([\w\d]{2,4})\??.*$/)) {
        @y = ($1,$2,$4,$5);
        $y[0] =~ s/([a-z][0-9][a-z]dlod[\d]{3})|((cache|cdn)[-\d]*)|([a-zA-Z]+-?[0-9]+(-[a-zA-Z]*)?)/cdn/;
        print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "." . $y[3] . "\n";
    } elsif ($X[1] =~ m/^http:\/\/.*filehippo\.com\/.*\/([\d\w\%\.\_\-]+\.(exe|zip|cab|msi|mru|mri|bz2|gzip|tgz|rar|pdf))/){
        $y=$1;
        for ($y) {
            s/%20//g;
        }
        print $x . "http://fathayu//" . $y . "\n";
    } elsif (($X[1] =~ /filehippo/) && (m/^http:\/\/(.*?)\.(.*?)\/(.*?)\/(.*)\.([a-z0-9]{3,4})(\?.*)?/)) {
        @y = ($1,$2,$4,$5);
        $y[0] =~ s/[a-z0-9]{2,5}/cdn./;
        print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "." . $y[3] . "\n";
    # ==========================================================================
    # 4shared preview
    # ==========================================================================
    } elsif ($X[1] =~ m/^http:\/\/[a-z]{2}\d{3}\.4shared\.com\/img\/\d+\/\w+\/dlink__2Fdownload_2F.*_3Ftsid_(\w+)-\d+-\w+_26lgfp_3D1000_26sbsr_\w+\/preview.mp3/) {
        print $x . "http://fathayu/" . $1 . "\n";
    } else {
        print $x . $X[1] . "\n";
    }
}

sub GetID
{
    $id = "";
    use File::ReadBackwards;
    my $lim = 200 ;
    my $ref_log = File::ReadBackwards->new('/var/log/squid/referer.log');
    while (defined($line = $ref_log->readline))
    {
        if ($line =~ m/.*youtube.*\/watch\?.*v=([a-zA-Z0-9\-\_]*).*\s.*id=$IDS[0].*/){
            $id = $1;
            last;
        }
        if ($line =~ m/.*youtube.*\/.*cpn=$CPN[0].*[&](video_id|docid|v)=([a-zA-Z0-9\-\_]*).*/){
            $id = $2;
            last;
        }
        if ($line =~ m/.*youtube.*\/.*[&?](video_id|docid|v)=([a-zA-Z0-9\-\_]*).*cpn=$CPN[0].*/){
            $id = $2;
            last;
        }
        last if --$lim <= 0;
    }
    if ($id eq ""){
        $id = $IDS[0];
    }
    $ref_log->close();
    return $id;
}
### STOREURL.PL ENDS HERE ### |
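To see what the rewriter does, the sketch below re-implements only the YTIMG rule of storeurl.pl in shell with sed. It illustrates the idea (several mirror hosts map to one store URL) and is not a replacement for the Perl script; the function name `ytimg_store_url` is an assumption of this example, and the real helper also echoes the channel ID that Squid sends in front of each URL.

```shell
#!/bin/sh
# Illustration only: mimic the YTIMG branch of storeurl.pl.
ytimg_store_url() {
    # $1 = original URL. Drop the i1..i4 mirror host and keep the path
    # under the shared pseudo-host, so all mirrors share one cache key.
    # URLs that do not match are printed unchanged, like the final else branch.
    printf '%s\n' "$1" | sed -E 's#^http://i[1-4]\.ytimg\.com(.*)#http://fathayu/\1#'
}

ytimg_store_url "http://i1.ytimg.com/vi/abc123/default.jpg"
ytimg_store_url "http://i3.ytimg.com/vi/abc123/default.jpg"
```

Both calls print `http://fathayu//vi/abc123/default.jpg`, which is why a thumbnail fetched from one mirror can be served from cache when another client asks a different mirror.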

INITIALIZE CACHE and LOG FOLDER …

Now create the CACHE folder (for this test I used a folder on the local drive).

| # Log Folder and assign permissions
mkdir /var/log/squid
chown proxy:proxy /var/log/squid/
# Cache Folder
mkdir /cache-1
chown proxy:proxy /cache-1
# Now initialize cache dir by
squid -z |

START SQUID SERVICE

Now start the SQUID service with the following command:

| squid |

To verify that squid is running OK, issue the following command and look for the squid instances; there should be 10+ instances of the squid process.

ps aux | grep squid

Something like below …

TIP:

To start the SQUID server in debug mode and check for any errors, use the following:

| squid -d1N |

TEST time ….

It’s time to hit the road and do some tests….

YOUTUBE TEST

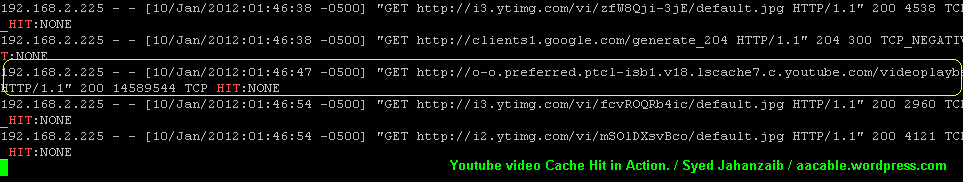

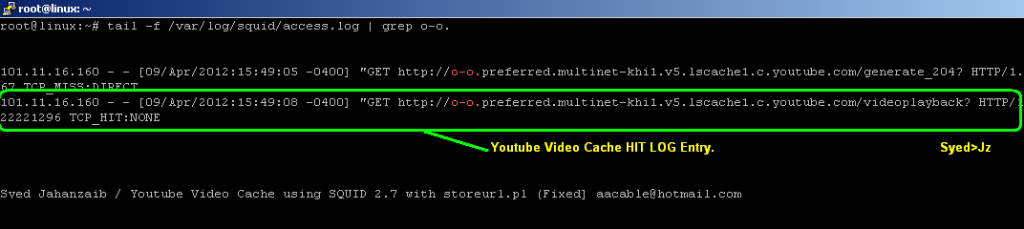

Open YouTube and watch any video. After the download completes, play the same video from another client. You will notice that it downloads very quickly. (YouTube video is saved in chunks of about 1.7 MB each, so after the first chunk completes it stops; if the user continues to watch the same video, the second chunk is served, and so on.) You can watch the bar moving fast without using internet data. As shown in the example below . . .

.

. .

..

FILEHIPPO TEST [ZAIB]

As Shown in the example Below . . .

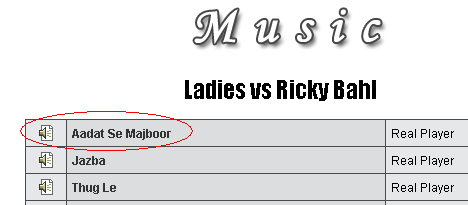

MUSIC DOWNLOAD TEST

Now test any music download. For example, go to http://www.apniisp.com/songs/indian-movie-songs/ladies-vs-ricky-bahl/690/1.html

As Shown in the example Below . . .

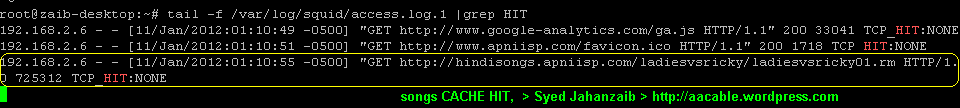

Download any song. After it has downloaded, go to the 2nd client PC, download the same song, and monitor the Squid access log. You will see a cache hit (TCP_HIT) for this song.

As Shown in the example Below . . .

EXE / PROGRAM DOWNLOAD TEST

Now test any .exe file download. Go to http://www.rarlabs.com and download any package. After the download completes, go to the 2nd client PC, download the same file again, and monitor the Squid access log. You will see a cache hit (TCP_HIT) for this file.

As Shown in the example Below . . .

SQUID LOGS
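The cache hits mentioned in the tests above can be tallied from the access log instead of watching it scroll by. The following is a minimal sketch, assuming the log is in Squid's native format where the 4th whitespace-separated field looks like TCP_HIT/200 or TCP_MISS/200; the function name `count_cache_hits` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: count requests whose result code contains HIT
# (TCP_HIT, TCP_MEM_HIT and similar) in a native-format access.log.
count_cache_hits() {
    # $1 = path to the access log
    awk '{ total++; if ($4 ~ /HIT/) hits++ }
         END { printf "%d hits out of %d requests\n", hits, total }' "$1"
}
```

With the log path used in this guide, the call would be `count_cache_hits /var/log/squid/access.log`.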

Other methods are as follows (I will update the following Squid 2.7 article soon):

http://aacable.wordpress.com/2012/01/19/youtube-caching-with-squid-2-7-using-storeurl-pl/

http://aacable.wordpress.com/2012/08/13/youtube-caching-with-squid-nginx/

.

.

.

MIKROTIK with SQUID/ZPH: how to bypass Squid Cache HIT object with Queues Tree in RouterOS 5.x and 6.x

.

.

Using Mikrotik, we can redirect HTTP traffic to the SQUID proxy server and also control user bandwidth. But it is a good idea to deliver already cached content to users at full LAN speed; that is what we set up a cache server for, to save bandwidth and have a fast browsing experience, right :p So how can we make Mikrotik deliver cached content to users at unlimited speed, with no queue applied to cached content? Here we go.

By using ZPH directives, we will mark cached content so that it can later be picked up by Mikrotik.

The basic requirement is that Squid must be running in transparent mode, which can be done via iptables and squid.conf directives. I am using Ubuntu with Squid 2.7 (on Ubuntu, apt-get install squid installs Squid 2.7 by default, which is great for our work).
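For the iptables part of transparent mode, one common form is a NAT REDIRECT rule on the Squid box that sends port 80 traffic to Squid's http_port. The sketch below only prints the rule so it can be reviewed before being run as root; the helper name `redirect_rule` is hypothetical, and the interface eth0 and port 8080 are assumptions taken from the values used elsewhere in this guide.

```shell
#!/bin/sh
# Hypothetical helper: print (do not execute) an iptables rule that
# redirects incoming HTTP traffic to the local Squid port.
redirect_rule() {
    # $1 = LAN-facing interface, $2 = Squid http_port
    echo "iptables -t nat -A PREROUTING -i $1 -p tcp --dport 80 -j REDIRECT --to-ports $2"
}

redirect_rule eth0 8080
```

If the Mikrotik router already forwards client HTTP traffic straight to the Squid port, this rule may not be needed on the Squid box.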

Add these lines in SQUID.CONF

| #===============================================================================
#ZPH for SQUID 2.7 (Default in ubuntu 10.04) / Syed Jahanzaib aacable@hotmail.com
#===============================================================================
# lanuser is the ACL for the local network, change it to match yours
tcp_outgoing_tos 0x30 lanuser
zph_mode tos
zph_local 0x30
zph_parent 0
zph_option 136 |

| #======================================================
#ZPH for SQUID 3.1.19 (Default in ubuntu 12.04) / Syed Jahanzaib aacable@hotmail.com
#======================================================
# ZPH for Squid 3.1.19
qos_flows local-hit=0x30 |
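Squid marks cache hits with the TOS value 0x30, while the Mikrotik rules in this guide match on dscp=12. The two agree because DSCP is the upper six bits of the TOS byte. A small worked check (the helper name `tos_to_dscp` is only illustrative):

```shell
#!/bin/sh
# Illustrative helper: derive the DSCP value from a TOS byte.
tos_to_dscp() {
    # DSCP occupies the upper six bits of the TOS byte, so shift right by two.
    echo $(( $1 >> 2 ))
}

tos_to_dscp 0x30   # prints 12
```

If a different TOS value is chosen in squid.conf, the dscp value in the mangle rule has to be recalculated the same way.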

Add the following rules on the Mikrotik router:

↓

↓

# Marking packets with DSCP (for MT 5.x) for cache hit content coming from SQUID Proxy

| /ip firewall mangle add action=mark-packet chain=prerouting disabled=no dscp=12 new-packet-mark=proxy-hit passthrough=no comment="Mark Cache Hit Packets / aacable@hotmail.com"
/queue tree add burst-limit=0 burst-threshold=0 burst-time=0s disabled=no limit-at=0 max-limit=0 name=pmark packet-mark=proxy-hit parent=global-out priority=8 queue=default |

↓

# Marking packets with DSCP (for MT 6.x) for cache hit content coming from SQUID Proxy

| /ip firewall mangle add action=mark-packet chain=prerouting comment="MARK_CACHE_HIT_FROM_PROXY_ZAIB" disabled=no dscp=12 new-packet-mark=zph-hit passthrough=no
/queue simple
add max-limit=100M/100M name="ZPH-Proxy Cache Hit Simple Queue / Syed Jahanzaib >aacable@hotmail.com" packet-marks=zph-hit priority=1/1 target="" total-priority=1 |

.

↓

↓

TROUBLESHOOTING:

The above config is fully tested with Ubuntu Squid 2.7 and Fedora 10 with LUSCA. Make sure your squid is marking TOS for cache hit packets. You can check it via tcpdump:

tcpdump -vni eth0 | grep 'tos 0x30'

(eth0 = LAN connected interface)

You should see something like this:

tcpdump: listening on eth0, link-type EN10MB (Ethernet), capture size 96 bytes

20:25:07.961722 IP (tos 0x30, ttl 64, id 45167, offset 0, flags [DF], proto TCP (6), length 409)

20:25:07.962059 IP (tos 0x30, ttl 64, id 45168, offset 0, flags [DF], proto TCP (6), length 1480)

192 packets captured

195 packets received by filter

0 packets dropped by kernel
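To get a rough feel for how much traffic is being marked, the verbose tcpdump output can be summarised instead of read line by line. This is a sketch under the assumption that each packet header line contains `IP (tos ...` as in the sample output; the function name `tos_hit_share` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `tcpdump -v` output on stdin and report how
# many IP header lines carry the cache-hit mark tos 0x30.
tos_hit_share() {
    awk '/IP \(tos /{ total++; if ($0 ~ /tos 0x30,/) marked++ }
         END { printf "%d of %d packets marked 0x30\n", marked, total }'
}
```

A possible invocation is `tcpdump -vni eth0 -c 200 | tos_hit_share`, which stops after 200 packets and prints one summary line.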

_________________________________

↓

↓

Regard’s

Waseem Raaj
